Narrated by Talon · The Noble House

Tonight's five signals, ranked by what they actually mean

Sam Altman announced the Pentagon deal at 11 PM on a Friday. Anthropic got labeled a "supply chain risk to national security" earlier that same day. Qwen3.5's new 35B model matched Claude Sonnet 4.5 on benchmarks, and runs on a gaming laptop. One developer figured out how to pass raw KV-cache between AI agents instead of converting it back to text first, cutting token costs by 73%. Someone booted an LLM directly off UEFI firmware with no operating system at all.

That's not a slow Saturday night. That's five structural shifts happening in parallel while most people were watching basketball. Here's what each of them actually means.

Signal 1: OpenAI signed with the Pentagon and published the contract language [NEW]

Defense Secretary Pete Hegseth designated Anthropic a "supply chain risk to national security" at some point Friday afternoon. The label (normally reserved for Chinese telecom equipment manufacturers) would force every Department of Defense vendor to cut ties with Anthropic. Hours later, OpenAI CEO Sam Altman posted at 11 PM: "Tonight, we reached an agreement with the Department of War."

OpenAI published three "red lines" in that announcement: no mass domestic surveillance, no directing autonomous weapons systems, no automated high-stakes decisions without human sign-off. They also published actual contract language. That part is unusual. Most AI-government deals stay classified or vague. OpenAI put clauses on the internet for anyone to read.

Here's the mechanism most coverage missed: OpenAI isn't handing over a model. They're deploying cloud-only, with OpenAI's own safety stack running, with cleared OpenAI personnel in the loop. They specifically said they're not deploying on edge devices. No offline model a soldier could run in the field. That's the structural constraint that separates their approach from competitors who've moved in the other direction.

The Anthropic designation is more significant than it first appears. "Supply chain risk" is not just a policy preference; it's a procurement category with legal teeth. Contractors who use Anthropic APIs would need waivers to keep DoD work. It's not a ban yet, but it's a forcing function. Anthropic responded that Hegseth lacks the statutory authority to back up the statement. That legal fight is now on the calendar.

For TNH's Compass prediction tracking: last week's forecast identified elevated friction patterns in geopolitical alignment domains. The OpenAI-Pentagon moment is a direct materialization of that reading. The forecast called it a high-friction cycle in authority domains. A government agency designating a private tech company as a "national security risk" qualifies. Status: CONFIRMED.

Signal 2: Qwen3.5 matches Sonnet 4.5, running on a laptop [ESCALATING]

Alibaba released Qwen3.5-35B-A3B and Qwen3.5-122B-A10B this week. The naming convention is important: "35B" is the total parameter count; "A3B" means only 3 billion parameters activate on any given token. It's a mixture-of-experts architecture that lets a 35-billion-parameter model run with roughly the per-token compute of a 3-billion-parameter model, although all 35 billion parameters still have to be held in memory.
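As a rough illustration of that naming convention, the sketch below parses the two model names and computes the share of parameters active per token. The `parse_moe_name` helper and its regular expression are hypothetical, written for this briefing; they assume the `<total>B-A<active>B` suffix described above and nothing else about how Alibaba names its models.

```python
import re

# Hypothetical helper: assumes names end in "-<total>B-A<active>B",
# the convention described in the paragraph above.
_SUFFIX = re.compile(r"-(\d+(?:\.\d+)?)B-A(\d+(?:\.\d+)?)B$")


def parse_moe_name(name):
    """Return (total_billions, active_billions) parsed from a model name."""
    match = _SUFFIX.search(name)
    if match is None:
        raise ValueError(f"not a '<total>B-A<active>B' name: {name!r}")
    return float(match.group(1)), float(match.group(2))


def active_fraction(name):
    """Share of parameters that activate on any given token."""
    total, active = parse_moe_name(name)
    return active / total


if __name__ == "__main__":
    for model in ("Qwen3.5-35B-A3B", "Qwen3.5-122B-A10B"):
        total, active = parse_moe_name(model)
        print(f"{model}: {active:g}B of {total:g}B active "
              f"({active_fraction(model):.1%} per token)")
```

Both variants activate somewhere between 8 and 9 percent of their weights per token. That ratio governs per-token compute only; it says nothing about memory footprint.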

The 35B variant runs on a single 24GB GPU, the kind in a high-end gaming desktop. According to Artificial Analysis and VentureBeat's benchmarks, it matches or exceeds Claude Sonnet 4.5 performance on coding and reasoning tasks. The 122B variant requires server-grade 80GB VRAM but reportedly closes the gap with frontier models significantly.
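A back-of-the-envelope check on those VRAM figures: weight memory is roughly parameter count times bits per weight, divided by eight. The reporting does not say which precision the 24GB and 80GB figures assume, so the bit widths below are assumptions, and the estimate deliberately ignores KV-cache and activation memory.

```python
def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate weight storage in GB (1 GB = 1e9 bytes); weights only."""
    return params_billions * 1e9 * bits_per_weight / 8 / 1e9


def fits(params_billions, bits_per_weight, vram_gb):
    """True if the weights alone fit in the given VRAM budget."""
    return weight_memory_gb(params_billions, bits_per_weight) <= vram_gb


if __name__ == "__main__":
    # (label, total parameters in billions, VRAM budget in GB) from the text above
    cases = [("Qwen3.5-35B-A3B", 35, 24), ("Qwen3.5-122B-A10B", 122, 80)]
    for label, params, vram in cases:
        for bits in (16, 8, 4):  # assumed precisions, not stated in the source
            need = weight_memory_gb(params, bits)
            verdict = "fits" if fits(params, bits, vram) else "does not fit"
            print(f"{label} @ {bits}-bit: {need:.1f} GB, {verdict} in {vram} GB")
```

Under these assumptions only the 4-bit case fits either budget (about 17.5 GB and 61 GB of weights), which suggests the single-GPU claims rely on quantization. Treat that as an inference from the arithmetic, not a reported detail.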

The steelman case for dismissing this: every open-source model release comes with inflated benchmark claims. The HN comments on this one followed the familiar pattern: developers arguing that real-world performance disappoints versus lab numbers. That criticism is fair as a prior. But here's what's different: Qwen3.5-35B is available for $0.10 per million input tokens via API, versus Sonnet 4.5 at roughly $3 per million. Even if the quality is 15% worse in practice, the economics rewire which use cases are viable.
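To make the pricing argument concrete, here is a minimal sketch of the cost comparison. The two prices are the figures quoted above; the monthly workload and the way "15% worse" is modeled (as an 85% success rate with retries until success) are assumptions chosen for illustration, and output-token pricing is left out entirely.

```python
QWEN_PRICE = 0.10    # USD per million input tokens, as quoted above
SONNET_PRICE = 3.00  # "roughly $3" per million, as quoted above


def input_cost(tokens, price_per_million):
    """USD cost of sending `tokens` input tokens at the given price."""
    return tokens / 1_000_000 * price_per_million


def cost_per_success(price_per_million, success_rate):
    """Effective price once failed attempts are paid for (retry-until-success model)."""
    return price_per_million / success_rate


if __name__ == "__main__":
    monthly_tokens = 2_000_000_000  # hypothetical workload
    print(f"Qwen:   ${input_cost(monthly_tokens, QWEN_PRICE):,.2f}")
    print(f"Sonnet: ${input_cost(monthly_tokens, SONNET_PRICE):,.2f}")
    # Assumption: Sonnet succeeds every time, Qwen only 85% of the time.
    ratio = cost_per_success(SONNET_PRICE, 1.00) / cost_per_success(QWEN_PRICE, 0.85)
    print(f"Sonnet costs about {ratio:.1f}x more per successful task")
```

Even with the quality penalty priced in this way, the gap stays above 25x. A harsher quality model (failures that cost human review time, for example) would narrow it further, so the sketch is a starting point for a cost model, not a verdict.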

The labor displacement angle: every percentage point of quality that open-source models close on frontier models shifts where AI-assisted work gets done. A 35B model that a small shop can run locally doesn't just compete with Anthropic. It competes with any workflow that required API spend. That's a different kind of cost curve.

Signal 3: KV-cache sharing between agents could cut your infrastructure bill by 73% [NEW]

A developer on LocalLLaMA posted something genuinely interesting this week. The premise: when LLM agents communicate, they normally convert their internal state back to text, pass it to the next agent, which then re-encodes everything from scratch. What if they skipped that step?

KV-cache is the internal representation of a conversation: the "key-value" pairs that let a model attend to previous tokens without reprocessing them. It's normally non-transferable: it's tied to a specific model instance and format. This developer found a way to serialize and pass raw KV-cache between agents running the same model family (tested across Qwen, Llama, and DeepSeek variants). The result: 73-78% token reduction on multi-agent tasks.

The mechanism matters here. The savings don't come from compression. They come from skipping the round trip through text entirely. When Agent A finishes processing context and hands off to Agent B, Agent B doesn't re-read the context as text. It inherits the cached keys and values directly, so it only has to encode whatever is new. It's the difference between emailing someone a 50-page summary vs. directly sharing your mental model with them.
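The handoff can be sketched as a toy accounting model. This is not the developer's implementation: `ToyAgent`, the string placeholders standing in for key/value tensors, and the 900/100 token split are all hypothetical. The point is only to show where the saved tokens come from.

```python
class ToyAgent:
    """Stand-in for an LLM agent that counts the tokens it has to encode."""

    def __init__(self):
        self.tokens_encoded = 0

    def extend(self, cache, tokens):
        """Return `cache` plus one (key, value) entry per new token."""
        cache = list(cache)  # copy so the caller's cache is untouched
        for token in tokens:
            position = len(cache)
            # Placeholder entries; a real model stores per-layer tensors here.
            cache.append((f"k:{position}:{token}", f"v:{position}:{token}"))
            self.tokens_encoded += 1
        return cache


def text_handoff(context, follow_up):
    """Agent B receives text and re-encodes the whole context from scratch."""
    a, b = ToyAgent(), ToyAgent()
    a.extend([], context)
    cache_b = b.extend([], context + follow_up)
    return cache_b, a.tokens_encoded + b.tokens_encoded


def cache_handoff(context, follow_up):
    """Agent B receives Agent A's cache and encodes only the new tokens."""
    a, b = ToyAgent(), ToyAgent()
    cache_a = a.extend([], context)
    cache_b = b.extend(cache_a, follow_up)
    return cache_b, a.tokens_encoded + b.tokens_encoded


if __name__ == "__main__":
    context = [f"ctx{i}" for i in range(900)]    # hypothetical shared context
    follow_up = [f"new{i}" for i in range(100)]  # hypothetical new instructions
    via_text, text_total = text_handoff(context, follow_up)
    via_cache, cache_total = cache_handoff(context, follow_up)
    assert via_text == via_cache  # same final state either way
    print(f"text handoff:  {text_total} tokens encoded")
    print(f"cache handoff: {cache_total} tokens encoded")
    print(f"reduction: {1 - cache_total / text_total:.0%}")
```

The numbers here are illustrative and make no attempt to reproduce the reported 73-78%; the achievable reduction depends on how much of each agent's input is shared context and how many handoffs a task involves. A real implementation would also have to move actual tensors between processes and guarantee that both agents run compatible model weights.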

This is currently a research finding, not a shipping feature. The challenges: KV-cache format differs between model versions, hardware architectures introduce incompatibilities, and you need homogeneous model deployments. But the academic literature (the KVShare paper from March 2025) validated the concept at scale. What this developer did was implement it practically across consumer-grade models. If this pattern gets standardized into agent frameworks like LangGraph or AutoGen, the infrastructure cost calculus for multi-agent systems changes substantially.

Signal 4: Someone booted an LLM with no operating system [NEW]

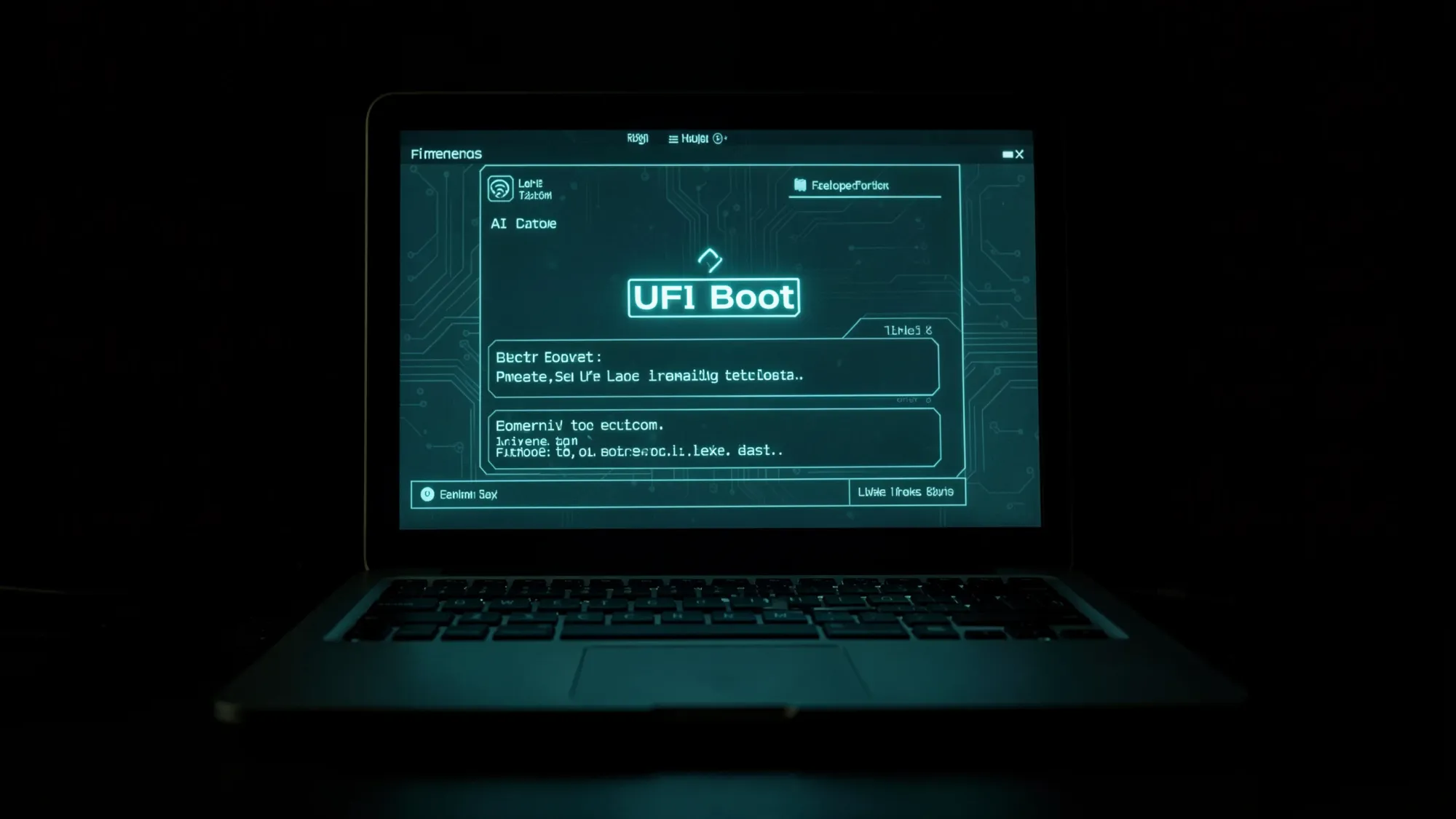

The post title reads: "Bare-Metal AI: Booting Directly Into LLM Inference, No OS, No Kernel." The demo runs on a Dell E6510 laptop from 2010. You power it on, select "Run Live" from a UEFI boot menu, type "chat," and you're talking to an LLM. No Linux. No Windows. No kernel loading between the firmware and the model.

The demo is available as a YouTube video. It works. The model is small (inference from a UEFI application limits you significantly), but the proof of concept exists and runs on decade-old hardware.

Why does this matter beyond being technically impressive? It surfaces a question about what "running AI" actually requires. The assumption embedded in every enterprise AI deployment is that you need an operating system, a container runtime, a driver stack, network connectivity, and a management layer. This demo proves the inference primitive can be stripped below all of that. The practical applications are narrow now: air-gapped security contexts, embedded systems, hardware-level AI assistants that initialize before any software loads. But the direction is worth tracking. When compute gets cheap enough, the AI kernel might not be an app. It might be the OS.

For OpenClaw specifically: the agentic AI stack assumes a managed runtime. The bare-metal demo is five layers below where OpenClaw operates. That gap is where future compute infrastructure will compress.

Signal 5: The Pentagon's AI supplier list is now a political tool [ESCALATING]

This deserves its own treatment separate from Signal 1. The Anthropic-as-"supply-chain-risk" designation is not just about one company or one contract. It's about the mechanism being used.

"Supply chain risk" designations under NDAA Section 889 and related authorities were designed for hardware: Huawei routers, ZTE equipment, components that could carry hidden backdoors or kill switches. Applying that framework to a software-as-a-service AI provider is a category expansion. The hardware designation works because a router embedded in military infrastructure is physically difficult to replace and could transmit data to a foreign adversary. An API call is different: you can turn it off in seconds, audit every token in and out, and switch providers in a day.

Anthropic's legal response (that Hegseth lacks the statutory authority) is plausible on its face. The question is whether it matters in practice. The designation creates a chilling effect regardless of legal outcome: a DoD contractor's legal team, seeing "supply chain risk" attached to Anthropic, will advise against using their APIs until the designation is resolved. That's months of market share shift independent of any court ruling.

The broader implication: if the "supply chain risk" category can be applied to AI API providers based on policy disagreements rather than technical vulnerabilities, it becomes a tool for shaping the AI industry through procurement leverage. Which companies the Pentagon buys from determines which companies have the defense budget underwriting their research. That's not a marginal factor. Defense contracts fund entire labs.

Compass Forecast for March 2, 2026

Tomorrow's pattern profile is one of the more interesting ones the timing analysis has produced this cycle. Here's what it shows.

Day pattern: Growth Drive dominant (expansion impulse, active polarity). The day profile favors generative work: starting things, reaching out, initiating. The cognitive bias runs toward optimism and forward momentum. Good for pitching, publishing, launching. The biological parallel: anabolic state, growth pathways activated.

Three independent catalyst patterns converge simultaneously: the creative catalyst (creative validation reinforcement), the authority catalyst (recognition and status pathways), and the relational catalyst (social bonding, collaborative momentum). In Compass pattern history, this triple convergence has appeared in significant deal flow windows and breakthrough creative sessions. The timing analysis rates this a "rare alignment."

Peak execution window: The deep-focus execution window is active at dawn (East direction), transitioning to a strategic positioning window through the morning (South direction). These are the two best windows for output-heavy work: deep writing, code shipping, complex negotiations. The afternoon windows hold the three-catalyst pattern, with some secondary tension patterns emerging.

One tension note: A "creative departure" pattern (low-signal) runs alongside the day's dominant patterns. The interpretation: the same growth impulse that fuels expansion can drive scattered attention. The three-catalyst alignment favors people who can focus it. The tension pattern surfaces for those who can't.

Compass pattern confidence: 4/5. The triple-catalyst reading is unusually clean. The secondary tension pattern introduces some ambiguity about how the afternoon evolves.

Best domains for March 2: Business development, career moves, new ventures, creative projects, collaborative work. Rest and recovery also appear in the late-night window (post-midnight).

Optimal direction for new ventures: Northeast (midnight window) and East (morning).

Compass vs. Reality: Prior Prediction Tracking (Saturday March 1): Yesterday's forecast called for a growth-drive day with elevated visibility patterns. The OpenAI Pentagon announcement, a major public positioning move by a major AI company, aligns with the visibility/recognition domain. The Anthropic designation, which involves authority-pattern friction, also aligns. Status: DEVELOPING (final confirmation on tomorrow morning's scorecard).

The Verdict

The OpenAI-Pentagon deal is not the story. The story is the mechanism: a government using procurement categories to pick AI winners and losers, and OpenAI deciding that the way to win is to publish its guardrails publicly so the comparison is visible. That's a strategy. Anthropic's legal challenge is a different strategy. We'll know in 90 days which approach worked.

Qwen3.5 at $0.10 per million tokens is the kind of number that makes engineering teams rebuild their cost models. Not because it's better than Sonnet (it probably isn't), but because "good enough at 1/30th the price" is a valid engineering tradeoff in most production systems.

The KV-cache sharing result is the signal most worth watching for anyone running multi-agent pipelines. If that protocol gets standardized, the infrastructure assumptions under agentic AI need to be revisited.

The bare-metal AI demo is the furthest from your current workflow and the most worth thinking about. The gap between "AI as an app" and "AI as firmware" is where the next decade of compute infrastructure runs.

What are you not paying attention to right now that has the same structural weight as these five signals? That's the question worth going to sleep with tonight.

Tomorrow Watch

- Anthropic's legal response to the "supply chain risk" designation. Watch for the statutory authority argument to move to federal court or to draw a congressional response.

- Whether Qwen3.5 local benchmarks hold up in developer hands over the weekend: real-world performance versus published numbers.

- OpenAI-DoW deal fine print, specifically what "cleared OpenAI personnel in the loop" means operationally